Frontier AI Thinking Explained

I was curious to learn about how frontier AI models "think", so I asked Gemini to explain it to me.

Frontier models perform something called autoregressive inference. The model takes in the user's prompt and generates one response token. It then feeds the prompt plus that token back into itself and generates the next token, and so on until the response is complete. In "thinking" models, much of this generated text is intermediate reasoning that stays hidden, so the user typically does not see the full thinking process.
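
The loop described above can be sketched in a few lines of Python. This is a toy illustration, not how Gemini or any real model is implemented: `toy_next_token` and `TOY_RULES` are hypothetical stand-ins for the neural network, and the "model" only looks up the last token in a small hand-written table.

```python
# Toy sketch of autoregressive generation. The "model" here is a
# hypothetical lookup table, standing in for a real neural network.
END = "<end>"

# Assumed toy rules: each token deterministically picks the next one.
TOY_RULES = {
    "hello": "there",
    "there": "friend",
    "friend": END,
}


def toy_next_token(tokens):
    """Return the next token given everything generated so far."""
    # A real model conditions on the whole sequence; this toy only
    # looks at the last token to keep the example small.
    return TOY_RULES.get(tokens[-1], END)


def generate(prompt_tokens, max_new_tokens=10):
    """Append one token at a time, feeding the growing sequence back in."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        nxt = toy_next_token(tokens)
        if nxt == END:
            break
        tokens.append(nxt)
    return tokens


print(generate(["hello"]))  # ['hello', 'there', 'friend']
```

The part that matters is the `for` loop: every new token is chosen by looking at the sequence so far, then appended to that same sequence before the next step.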

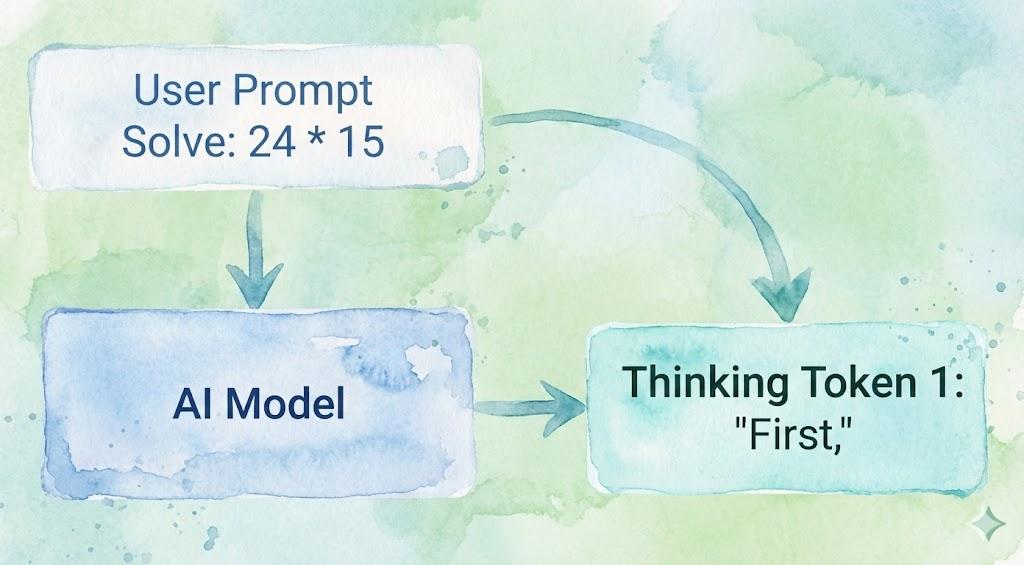

Step 1: The Initial Prompt

The process begins when the user sends a prompt to the model. The model then takes the user's input and generates the very first "reasoning token" of its hidden thought process.

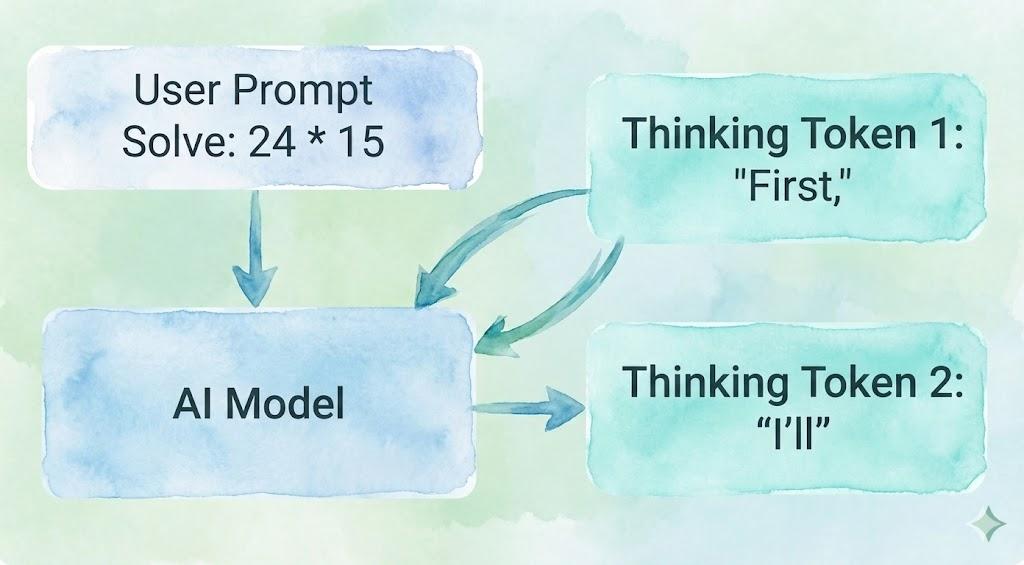

Step 2: The Autoregressive Loop

Gemini described this step as the core of the thinking process. In this step, the model takes its original input and the thinking token it just generated, feeds them back into itself, and predicts the next thinking token. This cycle repeats thousands of times, building a "chain of thought".

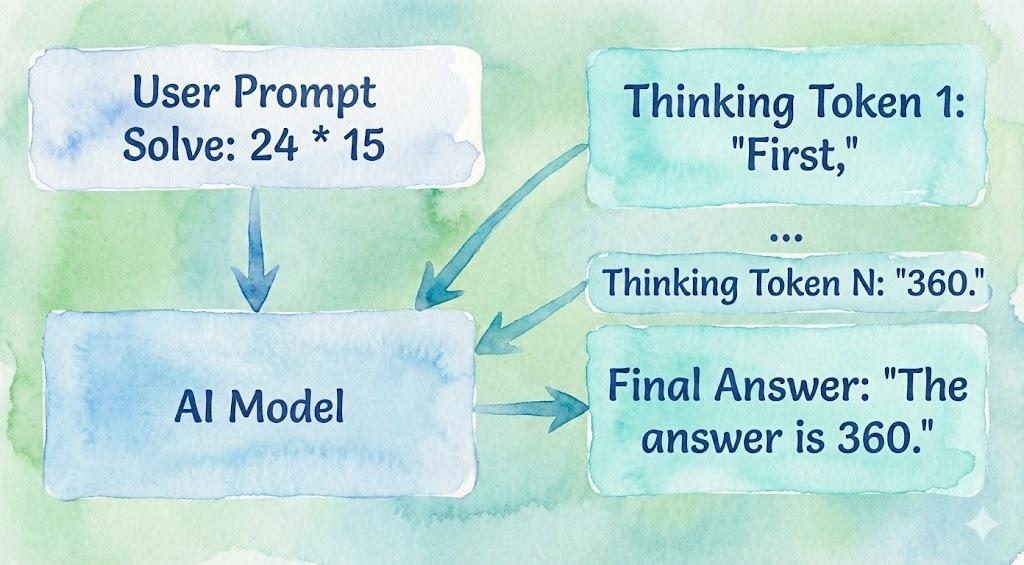

Step 3: The Final Output

After generating sufficient thinking tokens to solve the problem, the model completes its internal monologue. The model then uses that entire sequence of thought as context to generate the final output, which is the answer the user sees.
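
A rough way to picture the three steps together: the model keeps appending tokens to one sequence, and only the part after the thinking ends is shown to the user. In the sketch below, the `</think>` marker, the `SCRIPT` list, and `scripted_next_token` are all made up for illustration; real systems mark and hide their reasoning in their own ways.

```python
# Toy sketch: one token stream, split into hidden thinking and visible answer.
# The marker names and the scripted "model" are assumptions for illustration.
END_THINK = "</think>"
END = "<end>"

# Hypothetical scripted output: what the toy "model" emits, in order.
SCRIPT = ["2+2", "is", "4", END_THINK, "The", "answer", "is", "4", END]


def scripted_next_token(tokens, prompt_len):
    """Stand-in for a model: emit the next scripted token."""
    generated_so_far = len(tokens) - prompt_len
    return SCRIPT[generated_so_far]


def respond(prompt_tokens):
    """Run the loop, then return (hidden thinking, visible answer)."""
    tokens = list(prompt_tokens)
    prompt_len = len(tokens)
    while True:
        nxt = scripted_next_token(tokens, prompt_len)
        if nxt == END:
            break
        tokens.append(nxt)  # thinking and answer tokens share one sequence
    generated = tokens[prompt_len:]
    split = generated.index(END_THINK)
    thinking = generated[:split]
    answer = generated[split + 1:]
    return thinking, answer


thinking, answer = respond(["What", "is", "2+2?"])
print(" ".join(answer))  # The answer is 4
```

Here `thinking` holds the tokens the user would not see, and `answer` holds the final output that was generated with the whole thinking sequence already in context.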

Gemini went on to explain why "thinking" must be autoregressive.

This structure is critical for reasoning (the "thinking" process we discussed) because logic is inherently sequential.

- Dependency: You cannot conclude "Therefore, \(X\) is true" until you have established the premise "If \(Y\), then \(X\)."

- Correction: Because the model feeds its own output back into itself, it can "read" its own thought. If it generates "Wait, that calculation is wrong," that error-checking token becomes part of the history, forcing the model to pivot its strategy in the next token.

If the model were not autoregressive, it would have to generate the premise and the conclusion simultaneously, meaning the conclusion couldn't actually be derived from the premise—it would just be a hallucinated correlation.
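
For readers who like formulas, this kind of sequential dependence is usually written with the chain rule of probability. The symbols below (\(x_t\) for the token at position \(t\), \(T\) for the total number of tokens, \(p\) for the model's probability distribution) are notation introduced here for illustration, not something from Gemini's explanation.

\[
p(x_1, \dots, x_T) = \prod_{t=1}^{T} p(x_t \mid x_1, \dots, x_{t-1})
\]

Each factor on the right conditions on all the earlier tokens, which is how a conclusion token can depend on the premise tokens that came before it.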

Kind of makes sense. I won't claim to fully understand that explanation, but it does kind of make sense!

-Kairos Anamnesis

Comments? Send me an email! kairosanamnesis@gmail.com